InfraInspect

AI voice inspection pipeline for water infrastructure — from 3D desk-based damage planning to hands-free field capture, turning spoken observations into structured, validated damage records.

The problem worth solving

HydroMapper's InfraCloud platform centralises inspection data, 3D context, and structured damage records for water infrastructure: quay walls, harbours, bridges, hydraulic structures, and underwater assets. The platform worked. The field documentation workflow didn't match the realities of high-volume on-site inspection.

InfraCloud is web-based, not optimised for mobile, and requires substantial manual input for every damage record. In practice: one person inspects, another enters findings manually. Under real field conditions — bad weather, generator noise, poor connectivity, underwater work — the process becomes slow, error-prone, and fragile.

The core challenge was not identifying damages. It was capturing structured inspection data faster, more reliably, and with less manual effort — across two very different working contexts: the office, where damage suspicions are planned and reviewed against 3D models, and the field, where the same person is standing in front of a quay wall in bad weather with no free hand. Bridging those two contexts without disrupting either shaped every decision in this project.

Zero waiting. Record, move, record, move. Processing happens later. The AI never blocks the inspection flow.

An AI pipeline that assists without blocking — and that can be evaluated honestly from the first production submission.

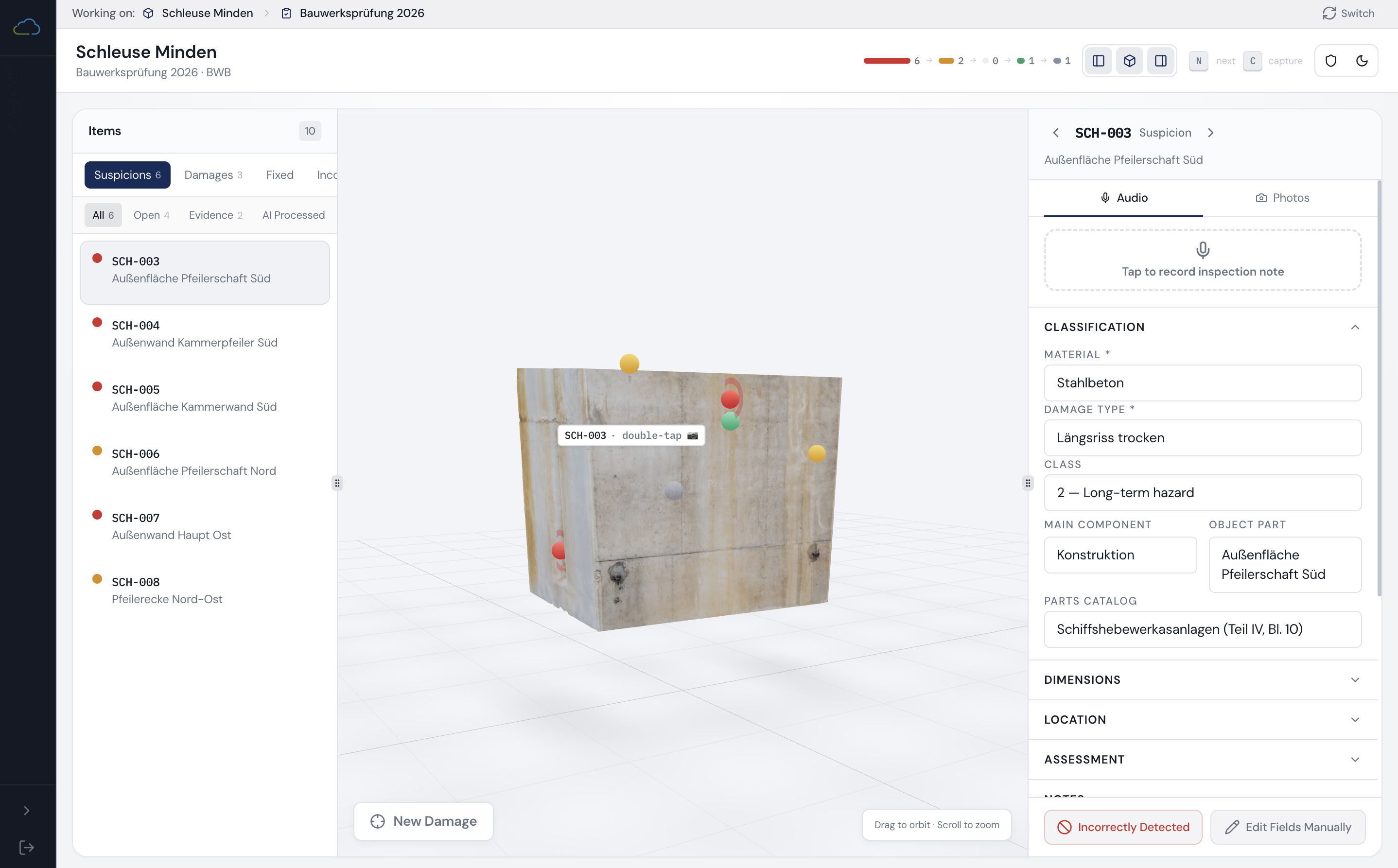

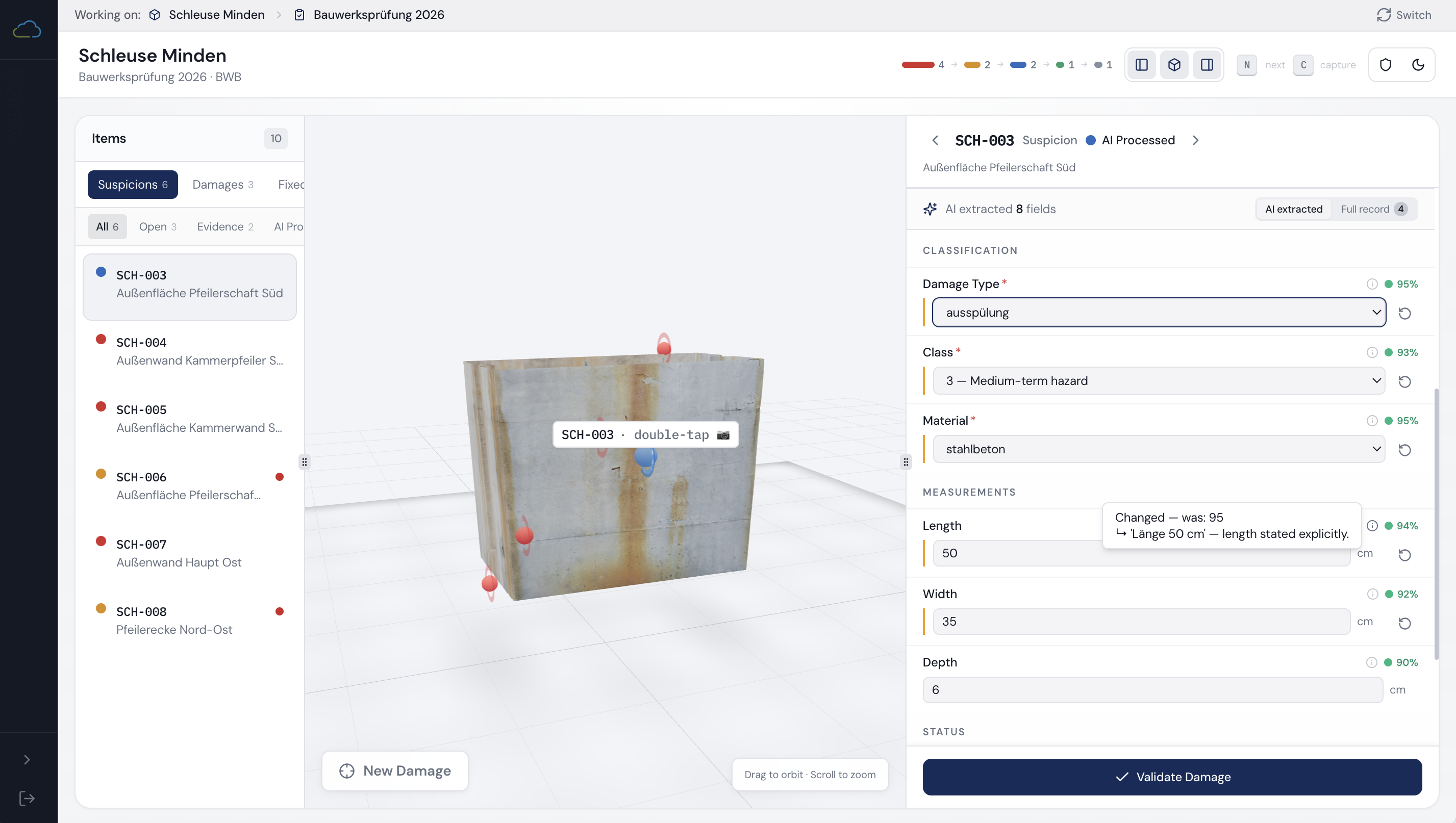

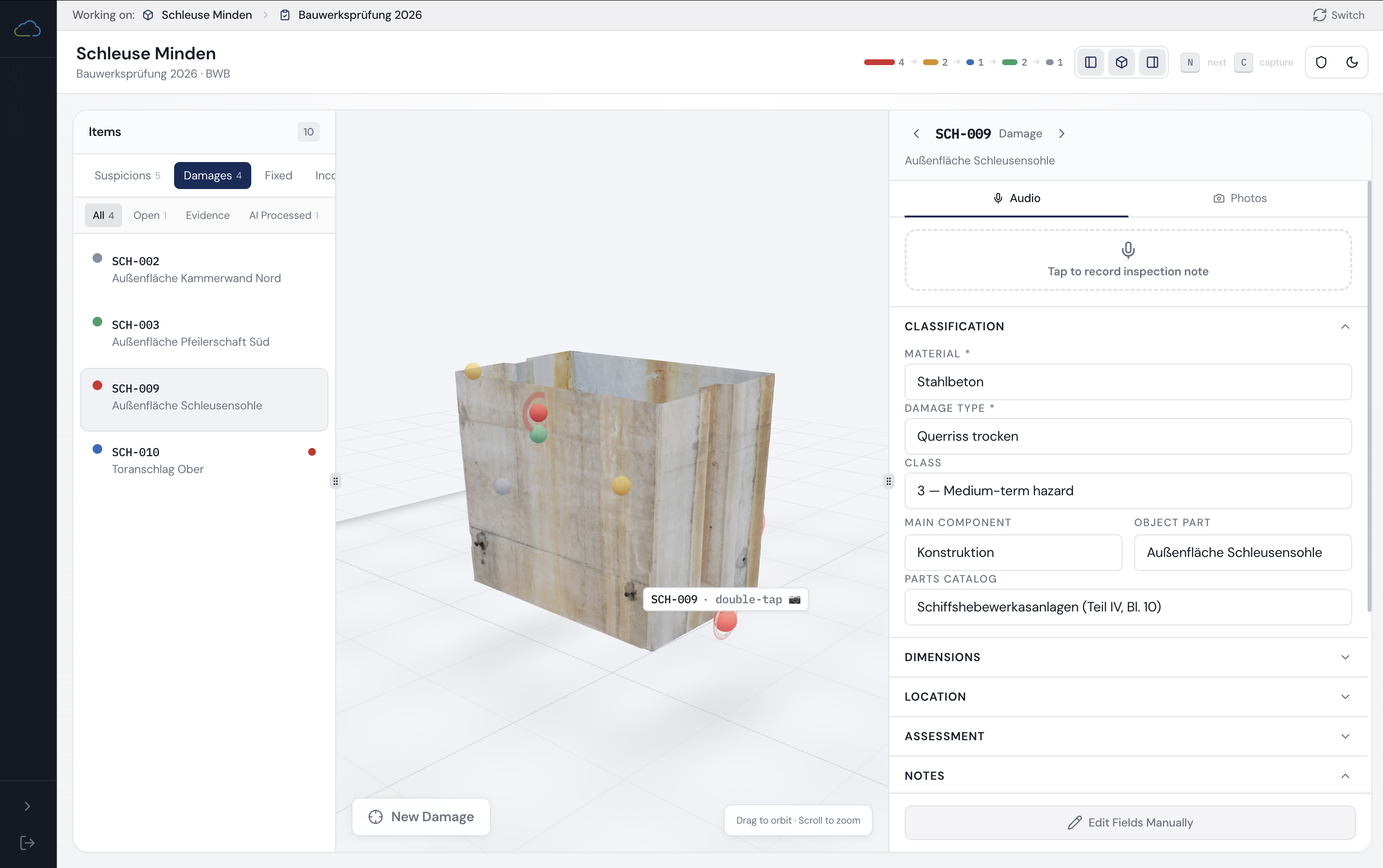

Inspection dashboard — phase-tracked progress across all suspicions

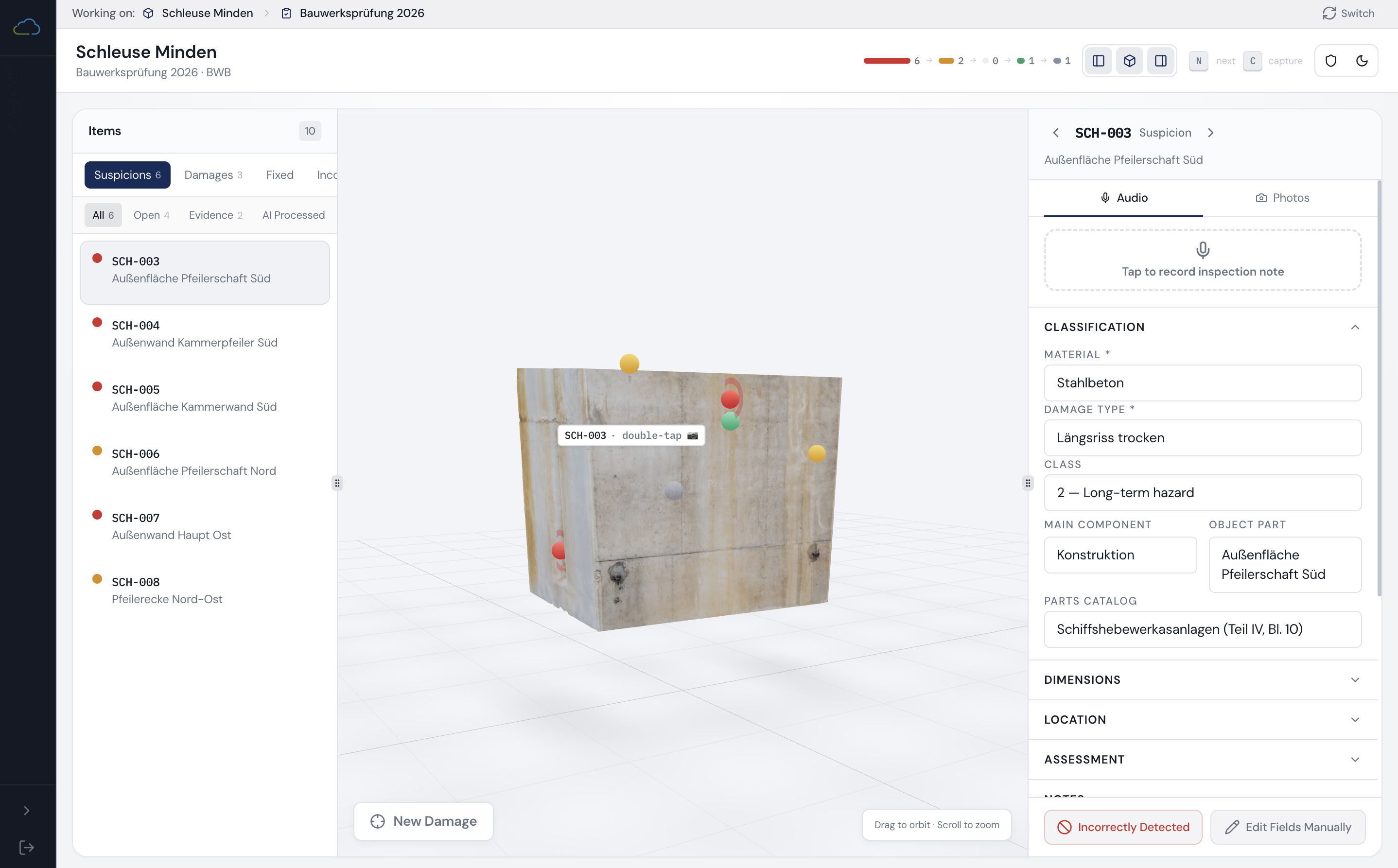

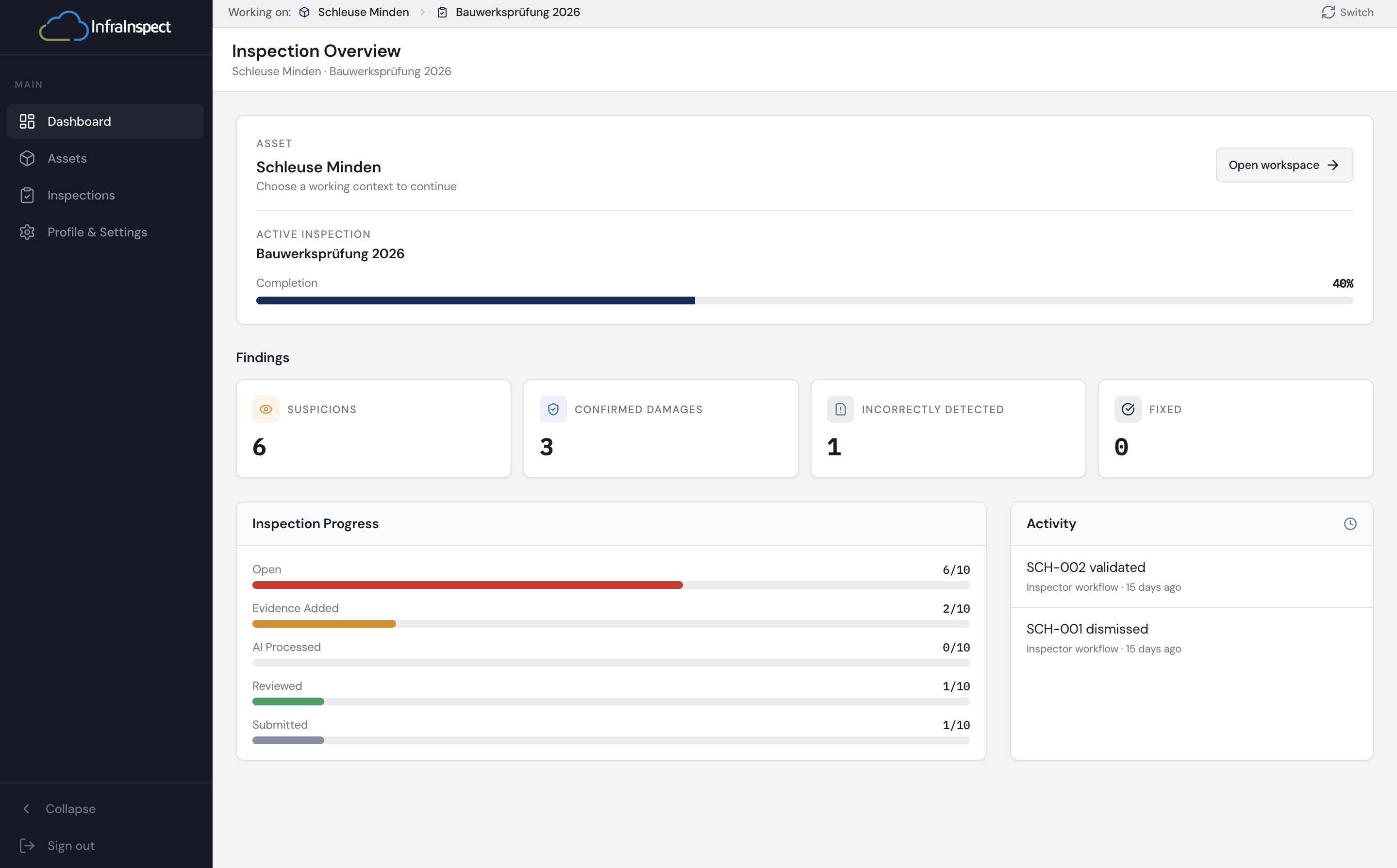

Evidence Added state — audio transcript preview before AI processing

AI Processed — extracted fields with per-field confidence and diff annotation

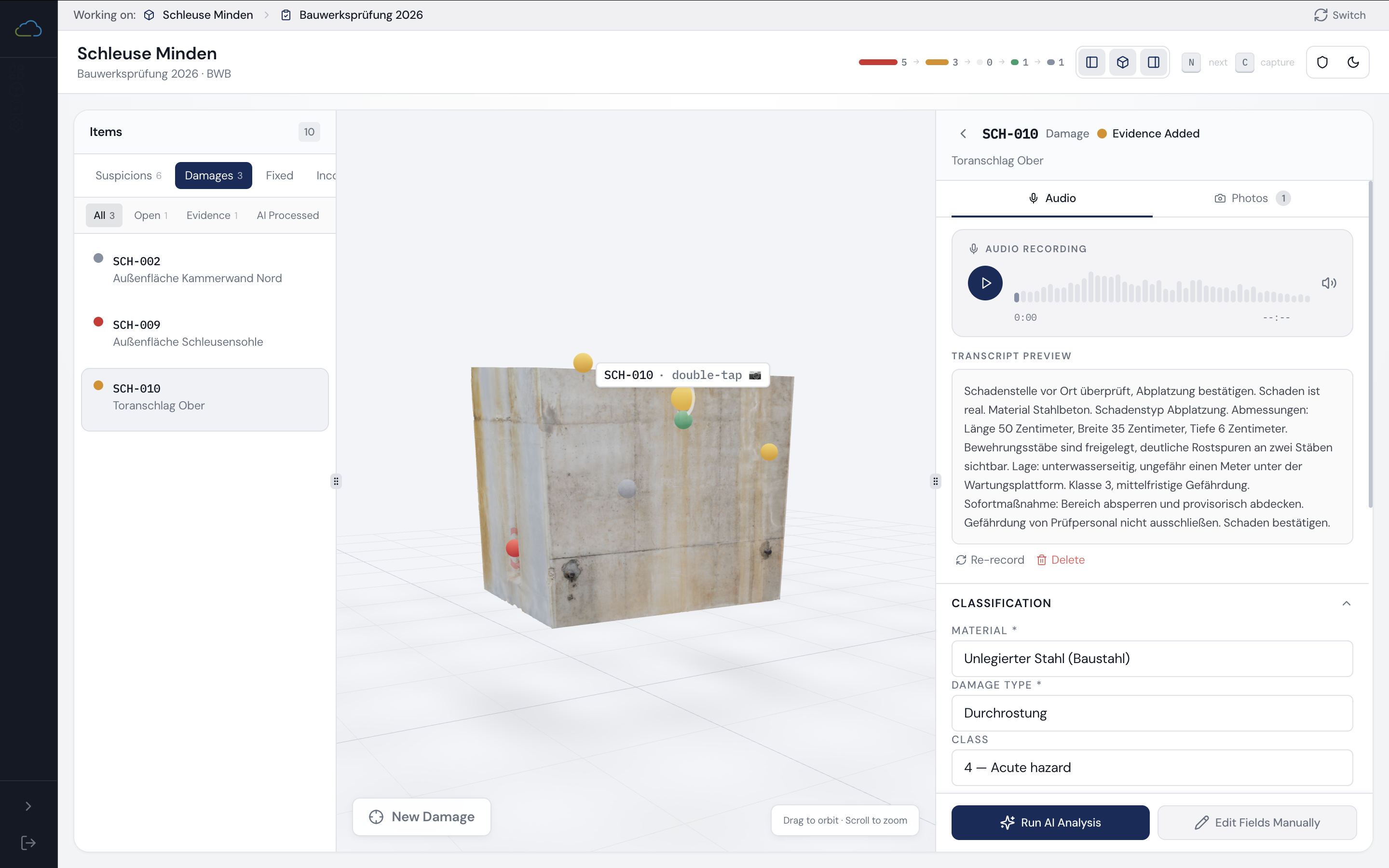

3D model viewer — damage markers colour-coded by pipeline phase

What I decided before building

The most important decision was what not to build. The inspection lifecycle has two phases. InfraInspect targets only Phase 2. That kept the POC deliverable in weeks rather than months and proved value on the highest-impact part of the workflow before adding complexity.

Inspectors work in sequences under time pressure. Any system that introduces waiting destroys the workflow. Capture happens in the field; processing happens when connectivity allows. The only real-time exception is the audio quality check, because a bad recording made in the field can't be fixed later in the office.

Submit is never blocked by low confidence — only by missing required fields or invalid cross-field values. A low-confidence suggestion the inspector can see and correct is better than a blocked workflow that creates a different kind of rework. This was a deliberate product decision, not a UX default.
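The gating rule above can be sketched in a few lines. This is a minimal illustration, not the project's actual code: the field names in `REQUIRED_FIELDS`, the `DraftRecord` type, and the crack-width rule are all hypothetical stand-ins. The point it shows is that confidence scores are stored on the record but never consulted when deciding whether submission is allowed.

```python
from dataclasses import dataclass, field

# Hypothetical field names; the real required set is not given in the text.
REQUIRED_FIELDS = ("damage_type", "location", "severity")


@dataclass
class DraftRecord:
    values: dict                                     # field name -> value
    confidence: dict = field(default_factory=dict)   # field name -> 0.0..1.0


def cross_field_errors(values: dict) -> list:
    """Hypothetical cross-field rule: a crack must carry a positive width."""
    errors = []
    is_crack = values.get("damage_type") == "crack"
    if is_crack and (values.get("crack_width_mm") or 0) <= 0:
        errors.append("crack requires crack_width_mm > 0")
    return errors


def submit_blockers(record: DraftRecord) -> list:
    """Return the reasons that block submission; confidence is never consulted."""
    blockers = [
        f"missing required field: {name}"
        for name in REQUIRED_FIELDS
        if record.values.get(name) in (None, "")
    ]
    blockers.extend(cross_field_errors(record.values))
    return blockers
```

An empty list from `submit_blockers` means the record can be submitted, however low the confidence on any individual field.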

Three measurable outcomes were committed to before any pipeline was drawn: 30% reduction in documentation time, 85% extraction accuracy on core fields, and fewer than 2 corrections per record on average. The evaluation schema was designed alongside the data model, not added after.
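As a rough sketch of how those three targets could be checked from per-record evaluation data: the `RecordEval` shape and its field names are assumptions made for illustration, as is the choice to count a core field as correct when the reviewer accepts it unchanged. Only the three target values come from the text.

```python
from dataclasses import dataclass

# Targets as stated in the text.
TARGET_TIME_REDUCTION = 0.30    # 30% less documentation time
TARGET_ACCURACY = 0.85          # 85% extraction accuracy on core fields
TARGET_MAX_CORRECTIONS = 2.0    # fewer than 2 corrections per record


@dataclass
class RecordEval:                 # hypothetical evaluation row
    baseline_seconds: float       # manual documentation time for a comparable record
    actual_seconds: float         # documentation time with the pipeline
    core_fields: int              # number of core fields evaluated
    core_fields_correct: int      # assumed: fields accepted unchanged by the reviewer
    corrections: int              # fields the reviewer had to edit


def evaluate(records: list) -> dict:
    """Aggregate the three outcome metrics and compare them with the targets."""
    if not records:
        raise ValueError("no records to evaluate")
    baseline = sum(r.baseline_seconds for r in records)
    actual = sum(r.actual_seconds for r in records)
    time_reduction = 1 - actual / baseline
    accuracy = (sum(r.core_fields_correct for r in records)
                / sum(r.core_fields for r in records))
    avg_corrections = sum(r.corrections for r in records) / len(records)
    return {
        "time_reduction": time_reduction,
        "accuracy": accuracy,
        "avg_corrections": avg_corrections,
        "meets_targets": (
            time_reduction >= TARGET_TIME_REDUCTION
            and accuracy >= TARGET_ACCURACY
            and avg_corrections < TARGET_MAX_CORRECTIONS
        ),
    }
```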

Out of scope: direct InfraCloud API integration in iteration one, dual operating modes, offline-first storage, underwater workflows, multilingual support, and real-time field assistance. Each exclusion was a product decision backed by a reason, not a casualty of time pressure. The out-of-scope list was as deliberate as the in-scope list.

I authored the DPIA and mapped the system against EU AI Act risk categories before the architecture was finalised. Transcript retention for auditability is confirmed. The final GDPR-compliant approach to audio retention and continuous learning is defined and under review with the client — treated as an open design decision with a known resolution path, not a risk to be addressed post-launch.

How it works

Every damage record moves through six pipeline phases. The complexity was making that state legible and consistent across four surfaces simultaneously: the 3D model markers, the progress UI in the dashboard, the review banner on the damage record, and the back-end job queue.

Fig. 01 — Field capture → AI pipeline → human review → confirmed record

In the field, the inspector's flow is deliberately simple: pick a damage location, record a voice note, attach photos, move on. No waiting, no form-filling, no connectivity required. Processing happens in the background once signal allows.

Behind the scenes, the recording passes through a sequence of checks before anything reaches a reviewer. Audio quality is assessed first, because a bad recording made in the field can't be fixed later in the office. The speech is then transcribed, the intent is classified, the relevant fields are extracted, and everything is validated against the official German water infrastructure damage catalogue before a human ever sees it.
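The ordering of those steps can be sketched as a list of stages run in sequence, stopping at the first failure. Everything here is illustrative: the `Job` type, the `StageFailed` exception, and all five stage bodies are hypothetical stubs (the "transcription" simply decodes bytes), standing in for the real speech-to-text, classification, extraction, and catalogue validation, which the text does not describe in detail.

```python
from dataclasses import dataclass, field


@dataclass
class Job:                      # hypothetical unit of work for one voice note
    audio: bytes
    transcript: str = ""
    intent: str = ""
    fields: dict = field(default_factory=dict)
    issues: list = field(default_factory=list)


class StageFailed(Exception):
    """Raised by a stage to stop the pipeline for this job."""


def check_audio_quality(job: Job) -> None:
    if len(job.audio) == 0:                     # stub quality gate
        raise StageFailed("empty recording")


def transcribe(job: Job) -> None:
    job.transcript = job.audio.decode("utf-8")  # stand-in for speech-to-text


def classify_intent(job: Job) -> None:
    job.intent = "confirm" if "confirm" in job.transcript else "new"  # stub


def extract_fields(job: Job) -> None:
    job.fields = {"note": job.transcript}       # stub extraction


def validate_against_catalogue(job: Job) -> None:
    if not job.fields.get("note"):              # stub catalogue check
        raise StageFailed("no extractable content")


STAGES = [
    ("audio_quality", check_audio_quality),
    ("transcription", transcribe),
    ("intent", classify_intent),
    ("extraction", extract_fields),
    ("validation", validate_against_catalogue),
]


def run_pipeline(job: Job, stages=STAGES) -> list:
    """Run stages in order; return the names of the stages that completed."""
    done = []
    for name, stage in stages:
        try:
            stage(job)
        except StageFailed as exc:
            job.issues.append(f"{name}: {exc}")
            break
        done.append(name)
    return done
```

Keeping the stages as plain named functions makes it easy to record which step a job stopped at, which is the information a reviewer or a job queue would need.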

The intent step matters more than it sounds. The system has to decide whether the inspector is confirming a suspected damage, rejecting one, or logging a new one entirely. Getting that wrong isn't a minor inconvenience — it would mean a dismissed damage appearing as confirmed in the record. So that classification is held to a higher standard than any other step in the pipeline.
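One way to express "a higher standard" in code is a stricter confidence threshold for intent than for ordinary fields, with anything below it left for the reviewer to decide explicitly. This is an assumption about the mechanism, not a description of the actual system; the `Intent` names mirror the three cases in the text, and the `INTENT_THRESHOLD` value is hypothetical.

```python
from enum import Enum
from typing import Optional


class Intent(Enum):
    CONFIRM = "confirm"   # inspector confirms a suspected damage
    REJECT = "reject"     # inspector dismisses a suspected damage
    NEW = "new"           # inspector logs a damage that was not planned


INTENT_THRESHOLD = 0.95   # hypothetical; deliberately strict


def resolve_intent(scores: dict) -> Optional[Intent]:
    """Return the top-scoring intent only if it clears the strict threshold.

    `scores` maps each Intent to a probability-like score. A result of None
    means the classifier is not sure enough and the reviewer must choose.
    """
    best = max(scores, key=scores.get)
    return best if scores[best] >= INTENT_THRESHOLD else None
```

Returning `None` rather than a best guess avoids the failure the text warns about: a dismissed damage silently appearing as confirmed.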

When the office reviewer opens a record, they see the values the AI extracted from the field recording, each carrying a confidence signal: green for high confidence, amber for uncertain, red for a guess. To interrogate any value, the reviewer hovers over a field and immediately sees both the previous value already in the system and the exact transcript excerpt the AI drew on for its suggestion. Both appear on a single hover, without navigating away. The reviewer can accept, edit, or revert any field individually. Nothing gets written to the database until a human signs off.
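A minimal sketch of the data behind that review screen, under stated assumptions: the `FieldSuggestion` type, the band cut-offs of 0.85 and 0.5, and the rule that every field needs a reviewer decision before anything is written are all hypothetical. The text only specifies the three colours, the hover content, and the accept, edit, and revert actions.

```python
from dataclasses import dataclass
from typing import Any


def confidence_band(score: float, high: float = 0.85, low: float = 0.5) -> str:
    """Map a 0..1 score to a colour band. Both cut-offs are hypothetical."""
    if score >= high:
        return "green"
    if score >= low:
        return "amber"
    return "red"


@dataclass
class FieldSuggestion:
    name: str
    previous: Any          # value already in the system
    suggested: Any         # value the AI extracted
    confidence: float
    excerpt: str           # transcript span the suggestion came from
    final: Any = None      # set only by a reviewer action
    decided: bool = False

    def hover(self) -> dict:
        """Everything the reviewer sees on a single hover."""
        return {
            "previous": self.previous,
            "excerpt": self.excerpt,
            "band": confidence_band(self.confidence),
        }

    def accept(self) -> None:
        self.final, self.decided = self.suggested, True

    def edit(self, value: Any) -> None:
        self.final, self.decided = value, True

    def revert(self) -> None:
        self.final, self.decided = self.previous, True


def ready_to_write(fields: list) -> bool:
    """Assumed sign-off rule: every field needs an explicit reviewer decision."""
    return bool(fields) and all(f.decided for f in fields)
```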

The 3D model isn't decorative. Every damage marker on the structure changes colour as the record moves through the pipeline — so at a glance, a reviewer knows what's been processed, what's still in progress, and what needs attention, without hunting through a list. Inspectors can tap directly on the model to select a location or log a new damage at the exact point on the structure where they found it.
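The marker colouring reduces to a lookup from pipeline phase to colour, plus a per-phase count for the at-a-glance view. In this sketch the phase names are the six listed in the Reflection section; the specific colours and the `summarise` helper are assumptions for illustration.

```python
from collections import Counter

# Phase names from the document; the colour choices are hypothetical.
PHASE_COLOURS = {
    "open": "grey",
    "evidence_added": "blue",
    "extracting": "purple",
    "ai_processed": "amber",
    "reviewed": "teal",
    "submitted": "green",
}


def marker_colour(phase: str) -> str:
    """Return the marker colour for a phase, rejecting unknown phase names."""
    if phase not in PHASE_COLOURS:
        raise ValueError(f"unknown phase: {phase!r}")
    return PHASE_COLOURS[phase]


def summarise(markers: dict) -> dict:
    """Count markers per phase. `markers` maps marker id -> phase name."""
    counts = Counter(markers.values())
    return {phase: counts.get(phase, 0) for phase in PHASE_COLOURS}
```

Rejecting unknown phases loudly, rather than falling back to a default colour, keeps the model from showing a marker whose state the rest of the system would not recognise.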

Outcomes

"Elsa took a workflow that required two people and a lot of manual entry and designed and built the AI pipeline to replace it — complete with compliance documentation and a system that an inspector can actually use in the field."

Reflection

Earlier real audio data. Iteration one deliberately chose the async office review model over real-time field assistance — the right call for scope and speed of value. But the pipeline is calibrated on synthetic TTS-generated German audio. Real field performance under actual inspection conditions is unknown. I would have pushed earlier for a small set of real audio samples before the architecture was finalised. The evaluation framework exists and is ready; data collection is the constraint I'd sequence differently.

Where I underestimated — state coherence across async boundaries. Six pipeline phases (open → evidence_added → extracting → ai_processed → reviewed → submitted) had to stay consistent across the 3D markers, the progress UI, the review banner, and the back-end job queue. Keeping that coherent was more product work than model work — the hidden cost of building real async AI UX. Getting the phase machine right was the hardest engineering and design problem in the project, and I'd have allocated it more time upfront if I could replay the scoping.
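The phase machine described above can be sketched as an enum, a table of allowed transitions, and a single place where phase changes happen and are broadcast. The six phase names and their order come from the text; the `DamageRecord` class, the listener mechanism, and the strictly linear transition table are assumptions. Failure or retry transitions are not described in the source, so none are modelled here.

```python
from enum import Enum


class Phase(Enum):
    OPEN = "open"
    EVIDENCE_ADDED = "evidence_added"
    EXTRACTING = "extracting"
    AI_PROCESSED = "ai_processed"
    REVIEWED = "reviewed"
    SUBMITTED = "submitted"


# Assumed strictly linear: each phase may only move to the next one.
_ORDER = list(Phase)
ALLOWED = {phase: set(_ORDER[i + 1:i + 2]) for i, phase in enumerate(_ORDER)}


class DamageRecord:              # hypothetical holder of the single source of truth
    def __init__(self) -> None:
        self.phase = Phase.OPEN
        self._listeners = []

    def subscribe(self, listener) -> None:
        """Register a surface (3D marker, progress UI, banner, job queue)."""
        self._listeners.append(listener)

    def advance(self, target: Phase) -> None:
        """Move to `target` if the transition is allowed, then notify everyone."""
        if target not in ALLOWED[self.phase]:
            raise ValueError(f"illegal transition {self.phase.value} -> {target.value}")
        self.phase = target
        for listener in self._listeners:
            listener(target)
```

Routing every change through `advance` means the four surfaces never compute phase on their own; they only react to the same notification, which is one way to keep them from drifting apart.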

What transfers to the next project. The pattern — capture → AI draft → diff review → gated submission → structured audit, with phase-tracked UX and a spatial anchor where it fits — is reusable for any high-stakes workflow where a model drafts on behalf of an expert. Clinical notes, claims triage, KYC review, legal drafting. Same shape, different vocabulary. The structural decisions that matter are the same: what the AI proposes vs. what currently exists, who reviews and when, what the confidence signal means, and what the audit trail needs to contain. The domain changes; the pattern doesn't.