InvestoraAI

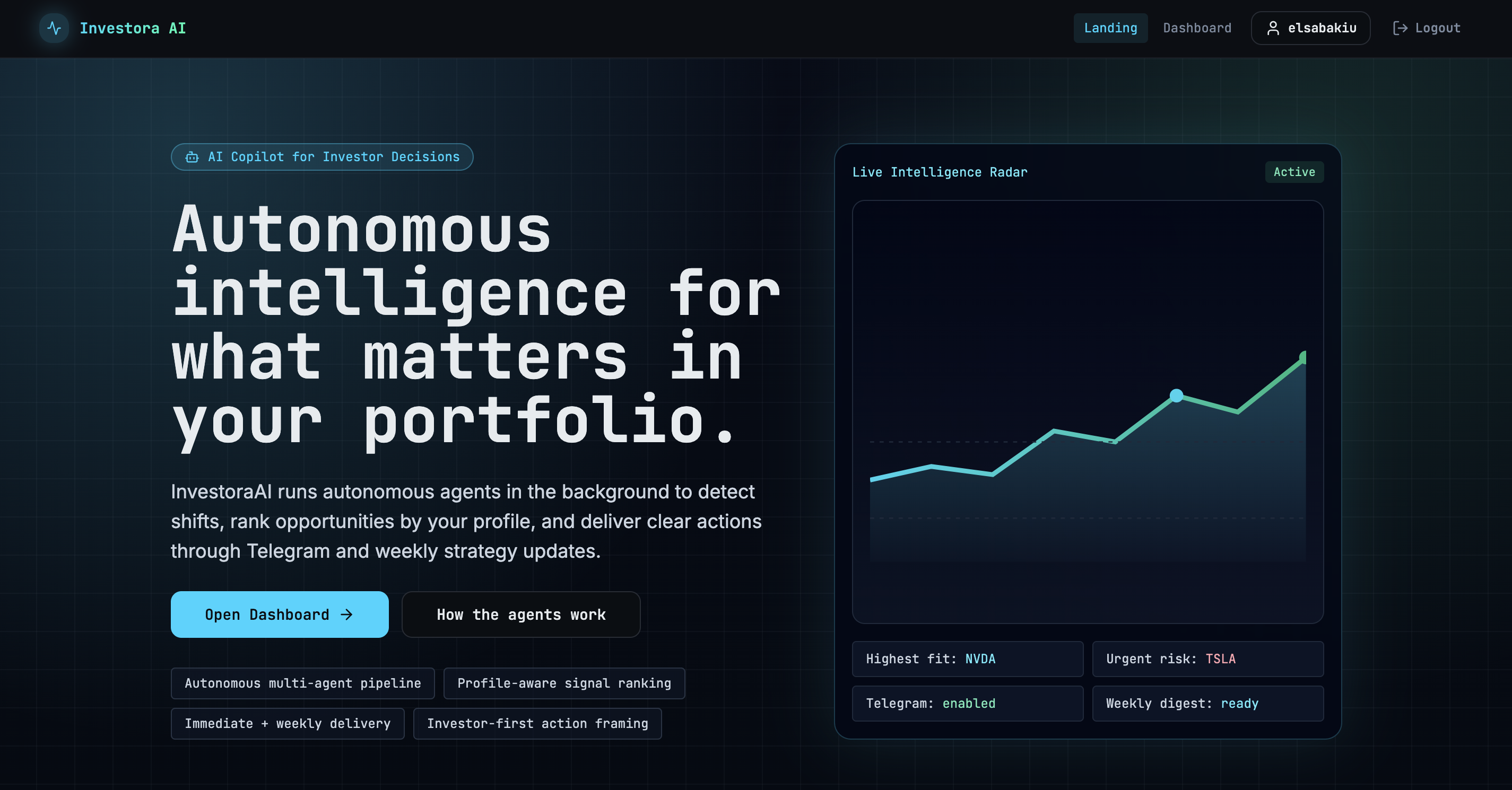

A stock intelligence platform built solo from scratch — designed around one weekly decision rather than a feed of noise.

The problem worth solving

Individual investors have access to more market data than ever — prices, fundamentals, news, sentiment, analyst ratings — scattered across a dozen tools with no system to connect them. The result is paralysis, not decisions.

Most AI tools in this space fail in one of three ways: they produce analysis without a clear action, they generate plausible-sounding output that isn't grounded in real data, or they claim personalisation while delivering the same output for every user. Impressive in demos. Useless in practice.

The answer is a single weekly briefing: here's what deserves your attention, why, and how confident the system is. Everything else can wait.

The goal: prove that starting with the decision, not the model's capabilities, produces a better AI product. Every architectural choice follows from that.

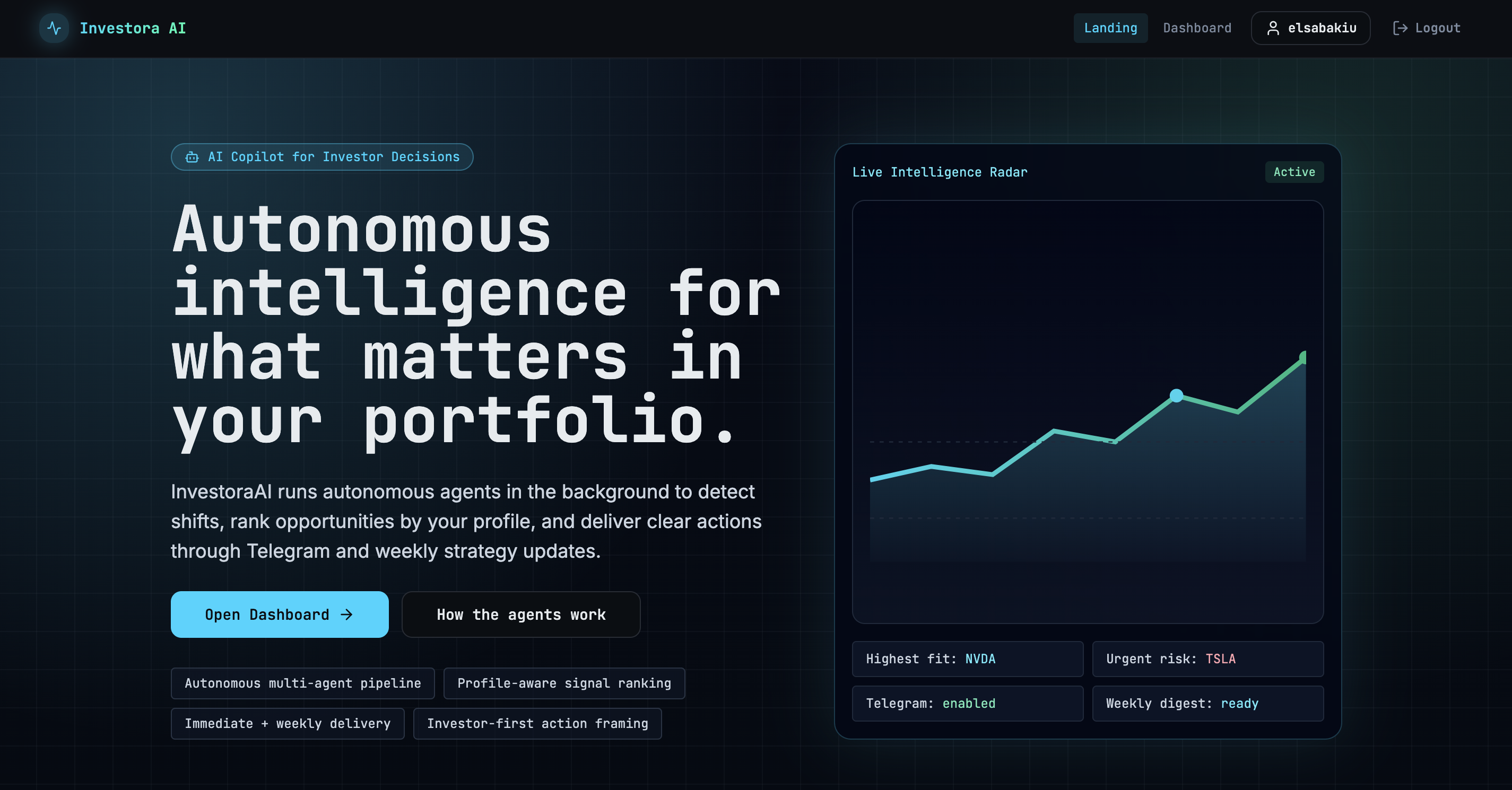

Dashboard — highest-conviction ideas ranked by composite score

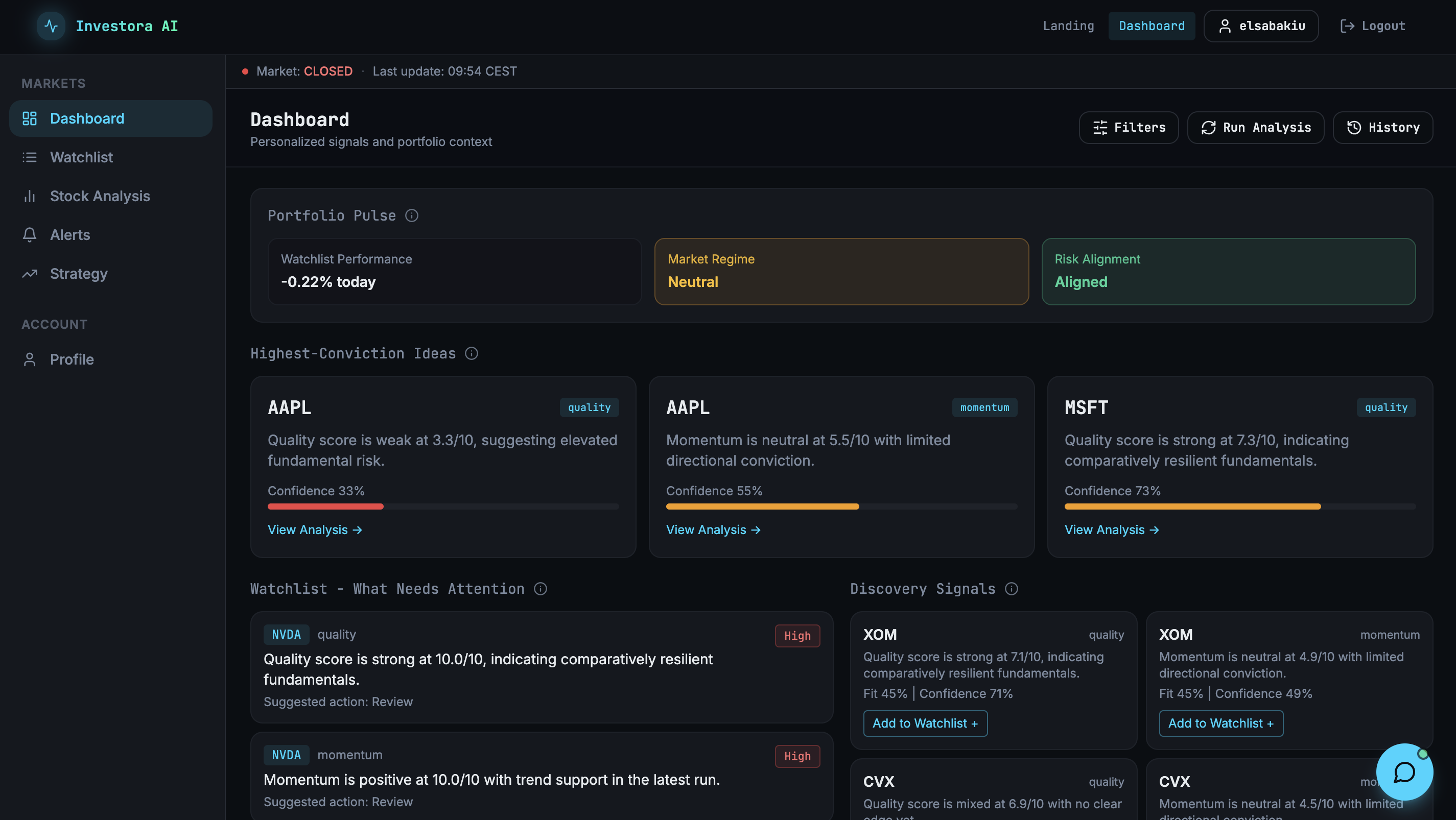

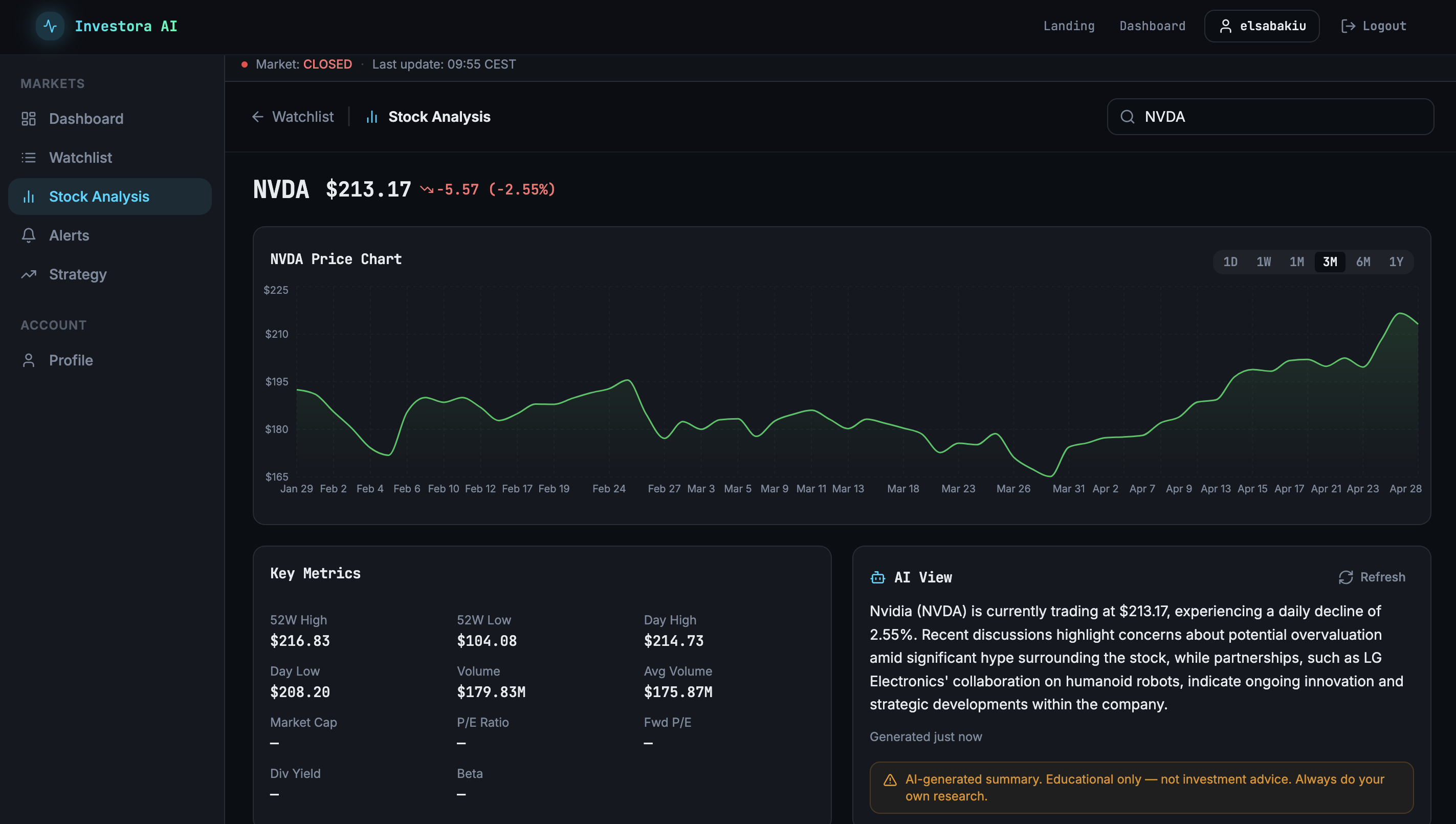

Stock analysis — price chart + AI-synthesised evidence view

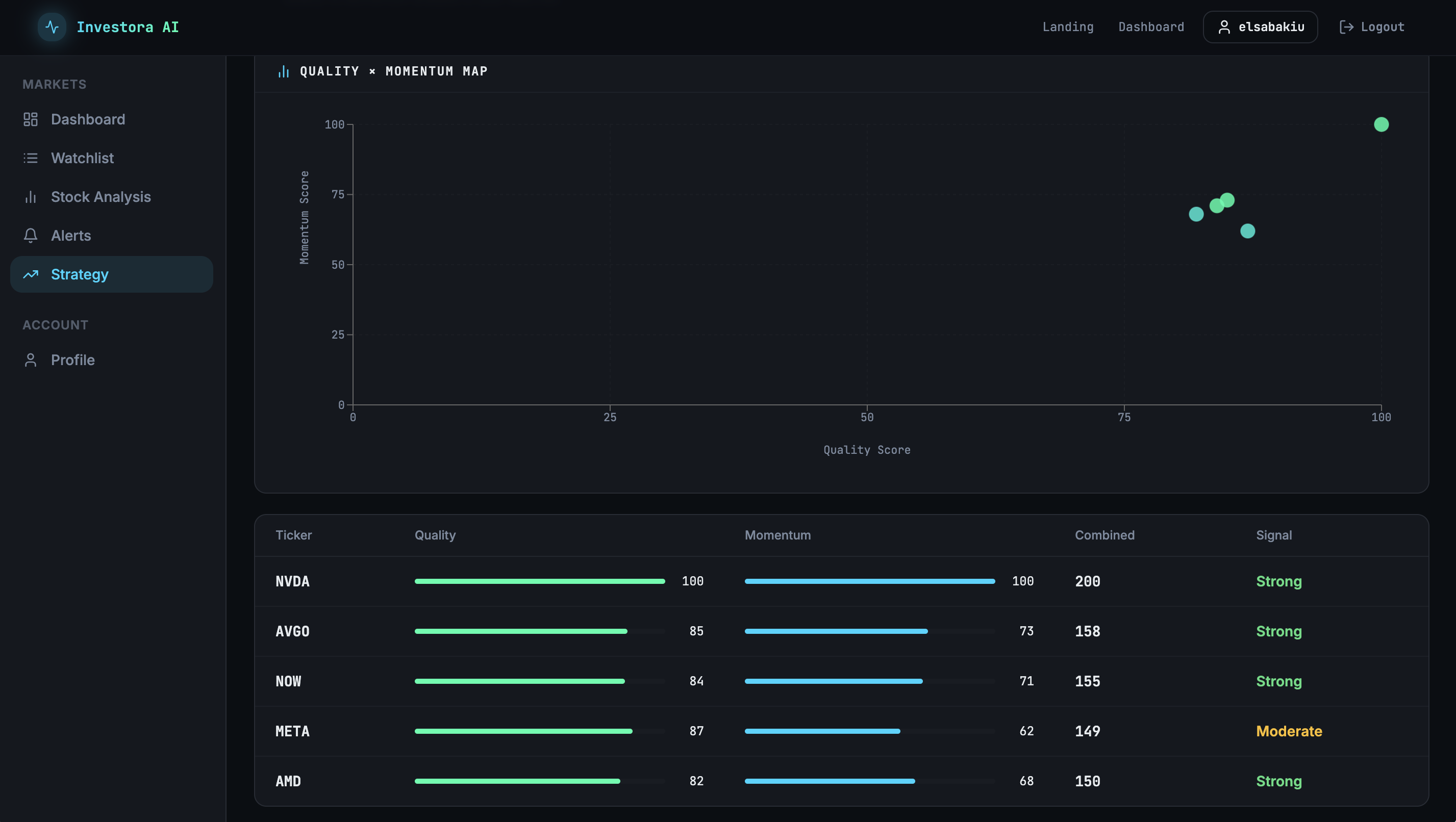

Strategy — quality × momentum map with signal classification

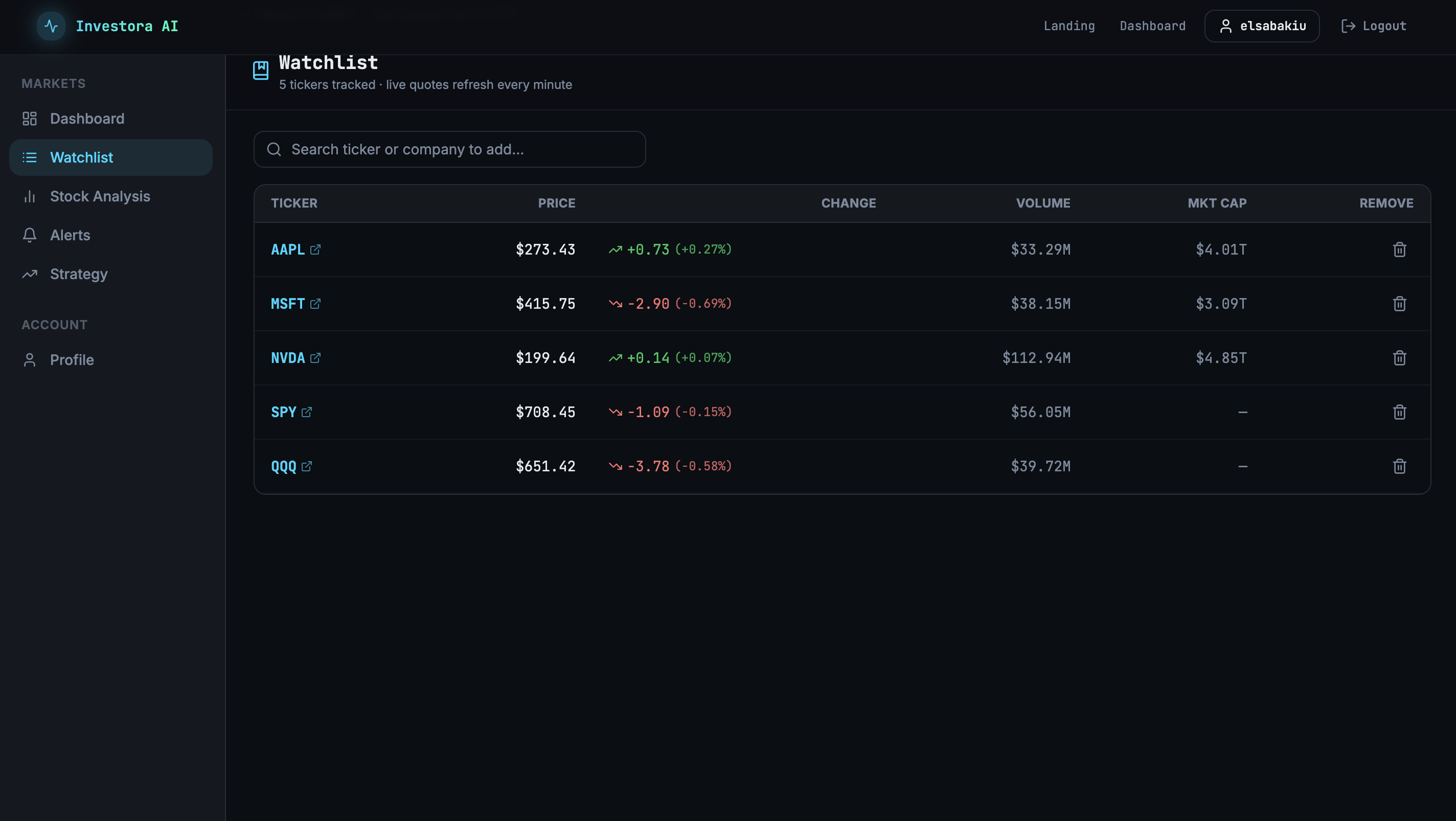

Watchlist — live quotes refreshing every minute

What I decided before building

The first question was not what the AI should do, but what decision it should support. The answer: a weekly prioritisation call on which opportunities deserve attention and why. That single framing drove every subsequent choice — weekly cadence, conviction score over prediction, structured dashboard over chat interface.

Real-time signals optimise for engagement, not decisions. A weekly summary forces the system to identify what actually matters rather than surfacing every movement. It also sidesteps the most common failure mode in financial AI products: alert fatigue that trains users to ignore the output.

The system produces a composite score from traceable, independently sourced inputs — P/E, earnings growth, price momentum, RSI, news sentiment — weighted explicitly (quality 55%, momentum 45%). Stocks fall into three signal bands: Alert, Candidate, Monitor. The weights are product decisions, not model defaults, chosen to favour signal trust over signal volume.
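As a rough illustration of the weighting described above, the sketch below combines two sub-scores with the stated 55/45 split and maps the result to the three bands. The 0-100 scale, the band thresholds, and the names `SubScores`, `composite_score`, and `signal_band` are assumptions for illustration; the source does not give cut-offs or say how the individual inputs roll up into the quality and momentum sub-scores.

```python
from dataclasses import dataclass

# Weights stated in the text: quality 55%, momentum 45%.
QUALITY_WEIGHT = 0.55
MOMENTUM_WEIGHT = 0.45

# Hypothetical band cut-offs on a 0-100 scale (not given in the source).
ALERT_THRESHOLD = 75.0
CANDIDATE_THRESHOLD = 50.0


@dataclass(frozen=True)
class SubScores:
    """Two pre-normalised sub-scores, each assumed to lie in 0-100."""
    quality: float
    momentum: float


def composite_score(scores: SubScores) -> float:
    """Weighted sum of the quality and momentum sub-scores."""
    return QUALITY_WEIGHT * scores.quality + MOMENTUM_WEIGHT * scores.momentum


def signal_band(score: float) -> str:
    """Map a composite score to one of the three signal bands."""
    if score >= ALERT_THRESHOLD:
        return "Alert"
    if score >= CANDIDATE_THRESHOLD:
        return "Candidate"
    return "Monitor"


if __name__ == "__main__":
    score = composite_score(SubScores(quality=80.0, momentum=60.0))
    print(round(score, 2), signal_band(score))
```

Keeping the weights and thresholds as named constants is one way to make them visible as product decisions rather than values buried in the scoring code.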

The analysis pipeline emits live progress events to the frontend via Server-Sent Events (SSE), so users see the agent working in real time through data collection, scoring, synthesis, and delivery. Transparency about process isn't an implementation detail; it's the mechanism that makes the output feel trustworthy rather than like a black box.
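To make the progress stream concrete, here is a minimal sketch of how stage updates could be serialised in the SSE wire format (an `event:` line, a `data:` line, then a blank line). The stage names follow the text; the `progress` event name, the payload fields, and the function names are hypothetical, and the project's actual server framework is not stated.

```python
import json

# Stage names taken from the text; identifiers are illustrative.
STAGES = ["data_collection", "scoring", "synthesis", "delivery"]


def format_sse(event: str, data: dict) -> str:
    """Serialise one Server-Sent Event as text for a text/event-stream response."""
    # Compact JSON contains no newlines, so a single data: line is enough.
    payload = json.dumps(data, separators=(",", ":"))
    return f"event: {event}\ndata: {payload}\n\n"


def progress_events():
    """Yield one SSE message per pipeline stage, in order."""
    total = len(STAGES)
    for step, stage in enumerate(STAGES, start=1):
        yield format_sse("progress", {"stage": stage, "step": step, "total": total})


if __name__ == "__main__":
    for message in progress_events():
        print(message, end="")
```

A web framework would stream these strings in a response with the `text/event-stream` content type, and the browser would read them with `EventSource`.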

Four things were deliberately left out. A chat interface was the wrong shape for a prioritisation decision. Real-time alerts in v1 were unnecessary, because the weekly cadence addresses the same need differently. Multi-user and advisor mode are sequenced for v2. Portfolio-level P&L tracking was dropped because the product is about signals, not portfolio accounting. Each cut had a reason; none was accidental.

What the product does not promise is investment performance. The system surfaces signals and supports decisions; it does not make them. Every AI View includes an explicit disclaimer. The product is not a robo-advisor and is designed so it cannot be mistaken for one.

How it works

When a user runs the weekly analysis, an AI agent works through four stages in sequence: it pulls market data from three separate providers with failover handling, scores every ticker in the watchlist using the conviction model, calls GPT-4o-mini to synthesise the evidence into plain-language reasoning, and streams the result live to the dashboard.
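The failover step in the first stage might look something like the sketch below: try each provider in order and return the first successful result. The provider functions, the `Quote` shape, and the `AllProvidersFailed` exception are stand-ins invented for illustration; the source does not name the providers or describe their clients.

```python
from typing import Callable, Sequence

Quote = dict  # hypothetical shape, e.g. {"ticker": "ABC", "price": 10.0}
Provider = Callable[[str], Quote]


class AllProvidersFailed(Exception):
    """Raised when no provider could return data for a ticker."""


def fetch_with_failover(ticker: str, providers: Sequence[Provider]) -> Quote:
    """Return the first successful provider result, trying providers in order."""
    errors = []
    for provider in providers:
        try:
            return provider(ticker)
        except Exception as exc:  # a real client would catch narrower error types
            name = getattr(provider, "__name__", "provider")
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed(f"{ticker}: " + "; ".join(errors))


# Stand-in providers for illustration only.
def primary(ticker: str) -> Quote:
    raise TimeoutError("timed out")


def secondary(ticker: str) -> Quote:
    return {"ticker": ticker, "price": 10.0, "source": "secondary"}


if __name__ == "__main__":
    print(fetch_with_failover("ABC", [primary, secondary]))
```

Collecting the per-provider errors before raising keeps the failure traceable, which fits the emphasis on grounded, sourced inputs.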

The agent shows its work. Each stage emits a progress event — so instead of waiting for a result to appear, the user sees data collection happening, scoring completing, analysis generating. That visibility is what separates the output from a black box.

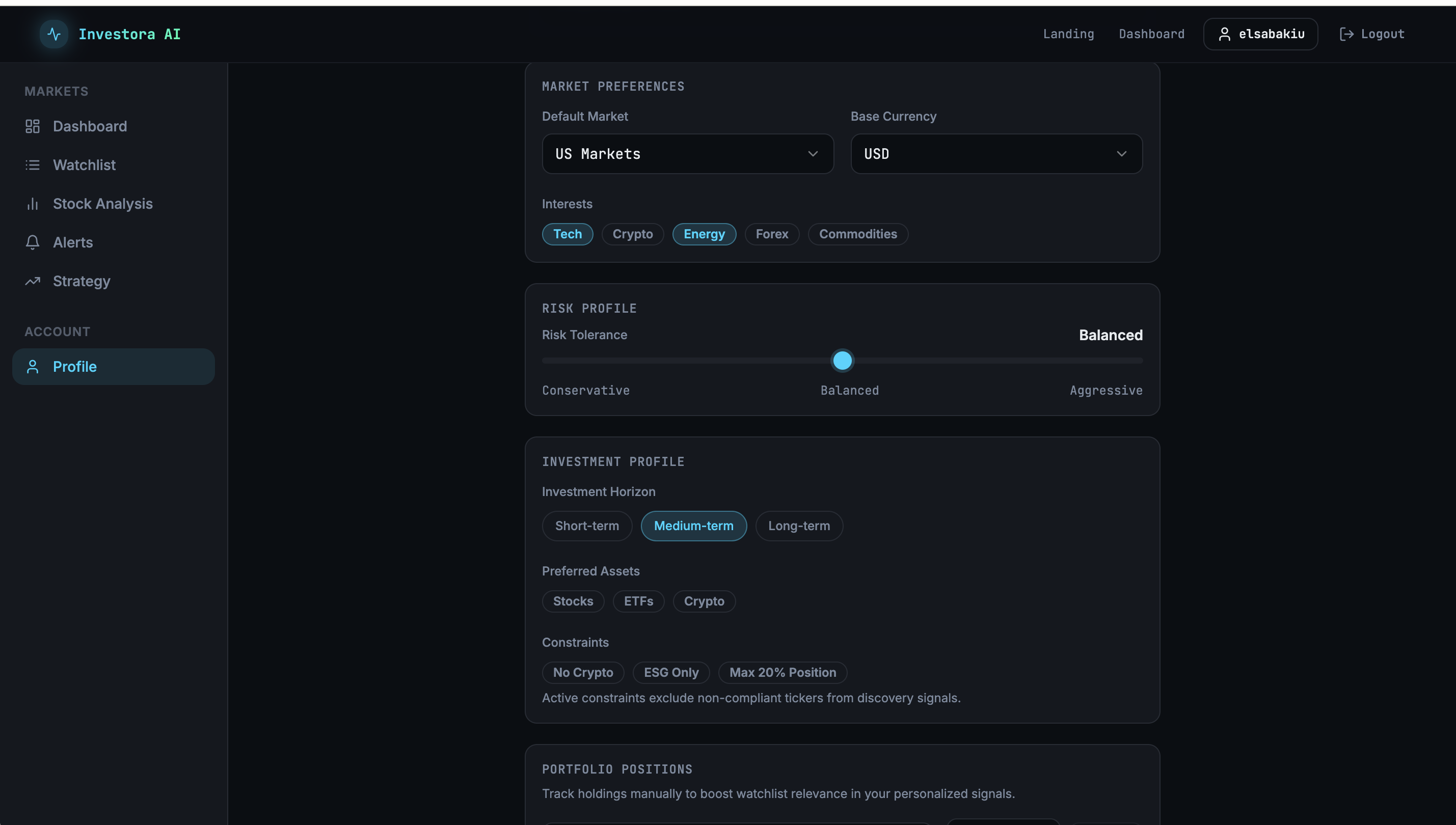

Profile settings shape what surfaces. A user's risk tolerance, investment horizon, preferred asset classes, and position constraints filter the signal output — so two users with different profiles see different priorities from the same underlying data. The personalisation is structural, not cosmetic.
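One way to picture structural personalisation is a filter applied to the same scored signals before ranking. The sketch below covers only risk tolerance and asset class; investment horizon and position constraints are omitted for brevity. The `Signal` and `Profile` fields, the 1-5 risk scale, and the `prioritise` function are assumptions, not the product's actual data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    ticker: str
    asset_class: str  # e.g. "equity" or "etf"
    risk_level: int   # 1 (low) to 5 (high); hypothetical scale
    score: float      # composite conviction score


@dataclass(frozen=True)
class Profile:
    max_risk: int             # highest risk level the user accepts
    asset_classes: frozenset  # asset classes the user wants to see


def prioritise(signals, profile):
    """Keep signals that fit the profile, ranked by score, highest first."""
    eligible = [
        s for s in signals
        if s.risk_level <= profile.max_risk and s.asset_class in profile.asset_classes
    ]
    return sorted(eligible, key=lambda s: s.score, reverse=True)


if __name__ == "__main__":
    signals = [
        Signal("AAA", "equity", 4, 82.0),
        Signal("BBB", "etf", 2, 74.0),
        Signal("CCC", "equity", 2, 65.0),
    ]
    cautious = Profile(max_risk=2, asset_classes=frozenset({"equity", "etf"}))
    aggressive = Profile(max_risk=5, asset_classes=frozenset({"equity"}))
    print([s.ticker for s in prioritise(signals, cautious)])
    print([s.ticker for s in prioritise(signals, aggressive)])
```

With these made-up signals, the two profiles receive different ranked lists from identical underlying data, which is the behaviour the paragraph above describes.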

Outcomes

Reflection

Define the scoring model earlier. I refined the conviction bands and weights iteratively through the build. Defining them upfront as explicit product decisions — with documented rationale — would have made every pipeline and UI decision faster. In an AI product, the scoring logic is the product spec. Treat it like one from day one.

SSE streaming changed how the product feels, not just how it performs. I added live progress streaming because the pipeline takes time and a blank screen erodes trust. What I didn't expect: showing the agent's work step by step made the output feel more credible, not just less frustrating. Transparency about process is a trust mechanic. I'd design for it from the start in any AI product with latency.

The decision-first framing is the most transferable thing here. Before writing a single prompt, I asked: what specific decision does this product support, and what does the user need to make it well? That question ruled out a chat interface, a prediction engine, and a data dashboard — all before any code. The pattern works for any AI product where the output is a recommendation rather than a generation: clinical notes, investment signals, supply chain prioritisation, hiring screens. Define the decision. Shape the product around it.